Nvidia’s Groq 3 LPU and the Shifting AI Landscape

Nvidia’s latest innovation in artificial intelligence, the Groq 3 Language Processing Unit (LPU), has introduced a new dimension to the global AI inference arms race. This development, unveiled at the company’s annual artificial intelligence conference, highlights how the growing market for AI agents like OpenClaw is reshaping the landscape for China’s semiconductor industry.

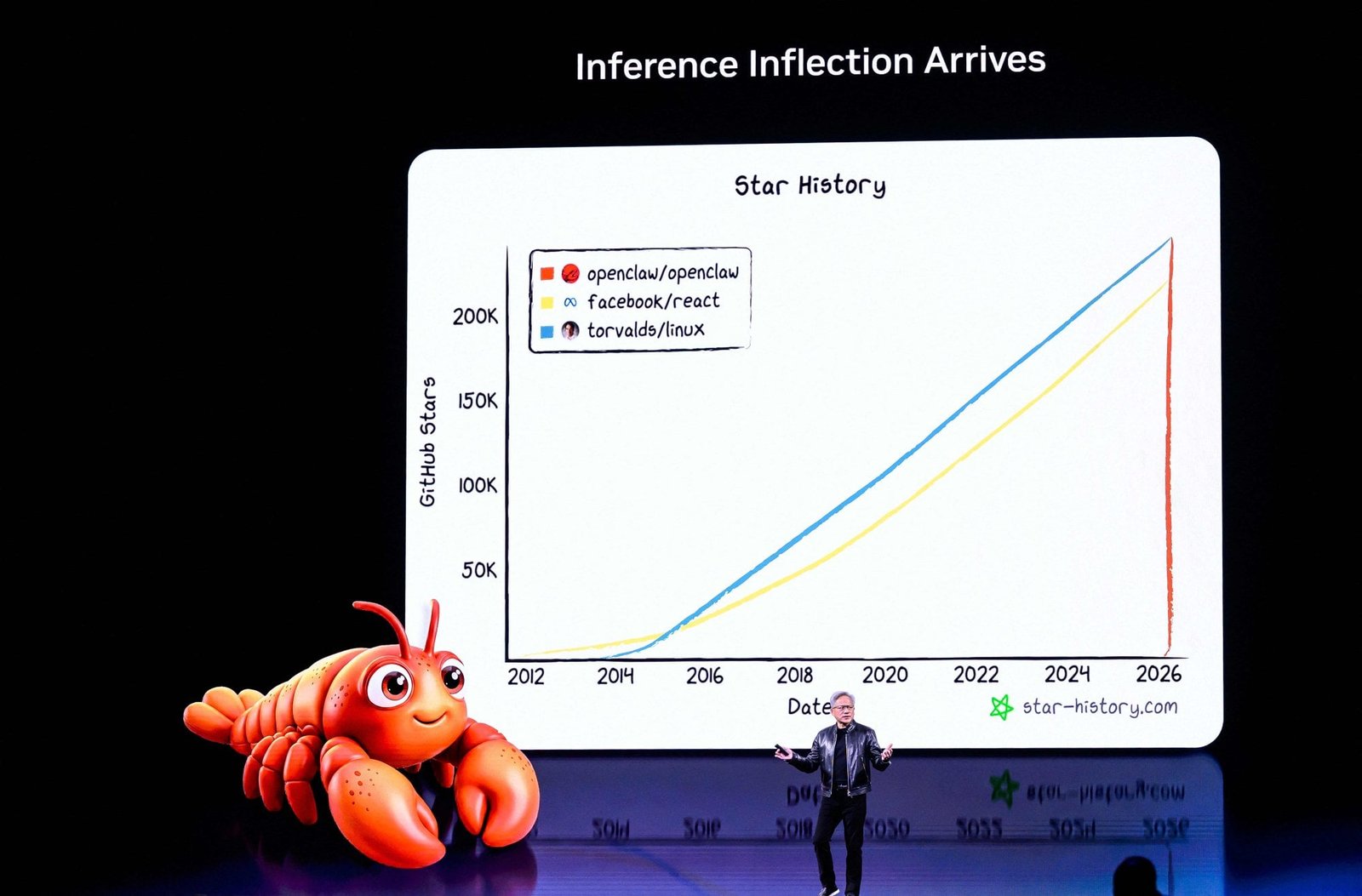

The Groq 3 LPU, launched on Monday at GTC 2026 in San Jose, California, is described by Nvidia as an accelerator with fast memory and low latency. It is designed specifically for agentic systems, which perform real tasks and run on inference workloads. By integrating this chip into the Vera Rubin platform, Nvidia is shifting from selling individual chips to offering “AI factories”: racks where central processing units (CPUs), graphics processing units (GPUs), and LPUs function together to “open the next frontier of agentic AI.”

This move signals a broader strategy by Nvidia: selling integrated systems rather than standalone hardware components. The Vera Rubin computing platform was introduced at GTC, marking a significant step in the evolution of AI infrastructure.

A Widening Gap and New Opportunities

Analysts note that the gap between Nvidia and its Chinese rivals is widening, and that it has shifted from individual chip performance to system-level dominance. Arisa Liu, chief director and research fellow at Taiwan Industry Economics Services, said that Chinese domestic chips now lag not merely in hardware specifications but in the standardization of the entire AI production pipeline.

However, the fragmentation of the AI inference market has created opportunities for Chinese chipmakers. Liu pointed out that not all AI workloads will run in data centers, suggesting that Chinese firms could focus on cost-effective breakthroughs in vertical fields involving models with tens of billions to hundreds of billions of parameters.

This shift indicates that Chinese chipmakers are no longer focused on building the most powerful GPU but are instead developing accelerators that best understand Chinese business scenarios. This approach allows them to target specific markets where they can offer tailored solutions.

Strategic Moves by Chinese Tech Giants

Chinese AI giant Baidu, which announced plans to spin off its Kunlunxin chip unit for a Hong Kong listing, has also recognized the potential of the larger inference market. Baidu’s CFO, Henry He, noted that Nvidia’s bet on AI inference, along with Baidu’s own efforts, has elevated the importance of inference architecture.

Kunlunxin is known for the M100 chip, designed for large-scale inference optimization. Other Chinese firms, including Huawei Technologies and Cambricon Technologies, have also launched their own inference chips, indicating a growing presence in the market.

Benefits for Chinese Component Makers

While Chinese chip designers struggle to match Nvidia’s pace, component makers within Nvidia’s supply chain are set to benefit. Nvidia CEO Jensen Huang’s projection of US$1 trillion in chip revenue through 2027 could provide a boost for companies like Wus Printed Circuit, a leading printed circuit board (PCB) maker, and Sichuan Em Technology, a chemical material provider.

As Nvidia’s new LPU kicks off “a new PCB cycle,” Wus could play a key role. Kuo Ming-chi, an analyst at TF International Securities, noted that Wus is the primary supplier of 52-layer PCBs for Nvidia’s LPU. This positions the Chinese firm well to benefit from Nvidia’s upcoming AI server architectures.

Conclusion

The introduction of the Groq 3 LPU underscores Nvidia’s continued leadership in the AI sector while presenting both challenges and opportunities for Chinese firms. As the market evolves, the focus on specialized solutions and strategic partnerships may help Chinese companies carve out a niche in the competitive AI landscape.